Project

Creative Ai+

Role

Product Designer

Contributors

Design Manager, Product Managers, UX Researchers, Developers

Responsibilities

Enable users to generate and edit images based on text prompts

Problem

Advertisers on the platform lacked AI-powered tools to generate or edit images. Without this key feature, users had to rely on tools outside of Sprinklr to produce campaign imagery, which slowed down campaign creation and made it harder to quickly test and iterate on visuals.

Answer

Advertisers using our module want to generate realistic ads that capture their respective audiences. They want to do so either by following a custom prompt specific to a channel, post, or placement, or by providing an image from their repositories to the generator as a reference.

As a user, I should be able to:

- Generate a series of images based on a text prompt
- Generate new variants of images based on the same text prompt
- Add a reference image
- Add effects to an image

User research

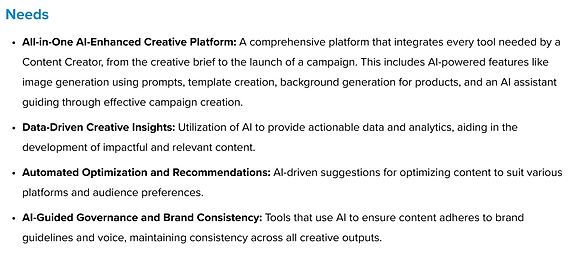

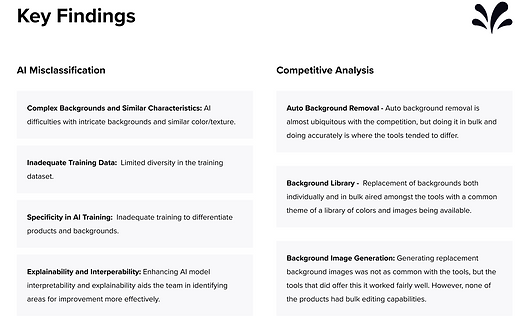

Collaborated with our UX Research team to understand the user personas and dive deeper into competitive research. The primary goal was to evaluate and compare the UX/UI of leading text-to-image AI platforms (Microsoft Designer, Canva, Adobe Express). The research was meant not only to explore primary and secondary use cases, but also to identify main personas, such as Content Creators, and to uncover potential new user groups. The insights culminated in actionable recommendations targeting both functionality and UX enhancements to help facilitate the integration of DALL-E into Sprinklr products.

Journey map

Created a journey map to understand the workflow users would follow to generate images from a prompt.

Design explorations

I began working on design explorations of potential avenues for building this new AI feature into our platform. Our first high-fidelity design aimed to enhance our Sprinklr AI+ tool by allowing users to generate and edit images for products in bulk, making the process efficient and time-saving.

After receiving feedback from internal stakeholders, we needed to explore the option of showing the AI prompt inside the modal during creation. This is where users begin their workflow in Sprinklr, by either selecting an existing image or using the AI functionality.

Final designs

After explorations, challenges, and feedback, we finalized the designs for Creative Ai+. They address the use cases and requirements, align all stakeholders, and provide a user experience that is enjoyable and easy to use. Click through the slides to view the UX and see the capabilities of Ai+.

Challenges

As a team, we faced an array of challenges that helped us learn, adapt, and collaborate to create the best user experience possible. Some of the challenges we faced throughout this process were:

Design iterations

Building out an entirely new product for Ai, we had to go through multiple design iterations and daily syncs to make sure the designs aligned with all teams (Leadership, Product Management, Developers).

Dev constraints

One challenge we needed to keep in mind was model limitations and prompt understanding. Given the timeframe and what developers told us could be implemented, we had to change the scope of some features (generative fill), as supporting them would have taken more time.

UI Elements

As we designed this new feature into our platform, we faced challenges in deciding how the UI elements would appear and in checking that our components and colors met guidelines.

What did I learn?

- Prompt-based generation systems need user experience guidance. When new users come in to use this AI feature for the first time, it's imperative to incorporate examples and contextual hints to help reduce friction and improve the outcomes our users are looking to achieve.
- Strong cross-functional collaboration was key. Working closely with my product managers, UX researchers, and developers helped define technical guardrails and include helpful tools that shape the user experience to be intuitive and user-friendly.

If I had more time, I would look for a way to introduce brand style guidelines that help users stay in line with the standards they need to follow within their brand. I would also look to push innovation by introducing video generation into the AI capabilities.

Next Steps

As we look to build out Ai in many other modules within our platform, the next step within the Creative Ai framework is to collaborate with my Product Manager and Developer to gather metrics on feature adoption, efficiency gains, and output quality. This will help us gauge what is needed to improve the product and continue improving the experience for our users.